In this tutorial, we will show you how to install Apache Spark on CentOS 8. For those who are not familiar with it, Apache Spark is a fast, general-purpose cluster computing system. It provides high-level APIs in Java, Scala, and Python, and an optimized engine that supports general execution graphs. It also supports a rich set of higher-level tools, including Spark SQL for SQL and structured data processing, MLlib for machine learning, GraphX for graph processing, and Spark Streaming.

This article assumes you have at least basic knowledge of Linux, know how to use the shell, and, most importantly, host your site on your own VPS. The installation is quite simple and assumes you are running as the root account; if not, you may need to prefix the commands with 'sudo' to get root privileges. I will show you the step-by-step installation of Apache Spark on CentOS 8.

Prerequisites

- A server running CentOS 8.

- It’s recommended that you use a fresh OS install to prevent any potential issues.

- A non-root sudo user or access to the root user. We recommend acting as a non-root sudo user, however, as you can harm your system if you’re not careful when acting as root.

Install Apache Spark on CentOS 8

Step 1. First, make sure your system is up to date and install the required dependencies.

sudo dnf install epel-release
sudo dnf update

Step 2. Installing Java.

Spark runs on the JVM, so Java must be installed first. Java installation was covered in a previous article, so refer to that article for the details. Spark 3.0.x is documented to run on Java 8 or 11. Once Java is installed, check the version with the command below:

java -version
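If you want the check scripted rather than read by eye, the following is a minimal sketch. It assumes the usual `java -version` banner, where the version string is the first quoted field, and `java_major` is a hypothetical helper name, not part of Java or Spark.

```shell
#!/usr/bin/env bash
# Extract the major version from a Java version string.
# Legacy scheme: "1.8.0_275" means Java 8. Current scheme: "11.0.9" means Java 11.
java_major() {
  v="$1"
  case "$v" in
    1.*) v="${v#1.}" ;;   # drop the legacy "1." prefix
  esac
  # Cut everything from the first separator (., +, _ or -) onward.
  echo "${v%%[.+_-]*}"
}

# Only query the JVM if one is on PATH.
if command -v java >/dev/null 2>&1; then
  # `java -version` prints to stderr; take the first quoted field.
  ver=$(java -version 2>&1 | awk -F'"' '/version/ {print $2; exit}')
  major=$(java_major "$ver")
  case "$major" in
    8|11) echo "Java $major detected: supported by Spark 3.0.x" ;;
    *)    echo "Java $major detected: Spark 3.0.x is documented for Java 8 and 11" ;;
  esac
else
  echo "java not found on PATH"
fi
```

The script only reports what it finds; it does not install or switch Java versions.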

Step 3. Installing Scala.

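Apache Spark is written in the Scala programming language, so we install Scala next. Note that the prebuilt Spark 3.0.1 package bundles its own Scala 2.12 libraries, so the Scala 2.13.4 installed here serves only the standalone scala tools. Before continuing, make sure Java is present (and Python, if you plan to use PySpark):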

wget https://www.scala-lang.org/files/archive/scala-2.13.4.tgz
tar xvf scala-2.13.4.tgz
sudo mv scala-2.13.4 /usr/lib
sudo ln -s /usr/lib/scala-2.13.4 /usr/lib/scala
export PATH=$PATH:/usr/lib/scala/bin

Once installed, check the Scala version:

scala -version

Step 4. Installing Apache Spark on CentOS 8.

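Now we download Apache Spark 3.0.1 from its official source. Keep in mind that downloads.apache.org hosts only current releases, so if the link returns a 404 error, look for the same file on archive.apache.org. Run the commands below from your home directory, because SPARK_HOME is set relative to $HOME: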

wget https://downloads.apache.org/spark/spark-3.0.1/spark-3.0.1-bin-hadoop2.7.tgz
tar -xzf spark-3.0.1-bin-hadoop2.7.tgz
export SPARK_HOME=$HOME/spark-3.0.1-bin-hadoop2.7
export PATH=$PATH:$SPARK_HOME/bin
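Because the version number appears in the URL, the archive name, and SPARK_HOME, it can help to derive all three from one place. The sketch below does that. The helper names `spark_pkg` and `spark_url` are hypothetical, and the archive.apache.org/dist prefix is an assumption about where older releases are kept.

```shell
#!/usr/bin/env bash
# Keep the version details in one place; override via the environment if needed.
SPARK_VERSION="${SPARK_VERSION:-3.0.1}"
HADOOP_PROFILE="${HADOOP_PROFILE:-hadoop2.7}"

# spark_pkg VERSION PROFILE -> directory/archive base name
spark_pkg() { echo "spark-$1-bin-$2"; }

# spark_url VERSION PROFILE SITE_PREFIX -> full tarball URL
spark_url() { echo "$3/spark/spark-$1/$(spark_pkg "$1" "$2").tgz"; }

PKG=$(spark_pkg "$SPARK_VERSION" "$HADOOP_PROFILE")
echo "package: $PKG"
echo "primary: $(spark_url "$SPARK_VERSION" "$HADOOP_PROFILE" https://downloads.apache.org)"
echo "archive: $(spark_url "$SPARK_VERSION" "$HADOOP_PROFILE" https://archive.apache.org/dist)"
echo "SPARK_HOME would be: $HOME/$PKG"

# Example use (not executed here): try the primary site, then the archive.
#   wget "$(spark_url "$SPARK_VERSION" "$HADOOP_PROFILE" https://downloads.apache.org)" ||
#   wget "$(spark_url "$SPARK_VERSION" "$HADOOP_PROFILE" https://archive.apache.org/dist)"
```

The script only prints the derived names; the actual download stays a separate, explicit step.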

Set up some environment variables before you start Spark, so that they persist across logins:

echo 'export PATH=$PATH:/usr/lib/scala/bin' >> ~/.bash_profile
echo 'export SPARK_HOME=$HOME/spark-3.0.1-bin-hadoop2.7' >> ~/.bash_profile
echo 'export PATH=$PATH:$SPARK_HOME/bin' >> ~/.bash_profile
source ~/.bash_profile
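Appending with `>>` adds the same lines again every time the commands are re-run. One way to avoid duplicates is a small guard like the sketch below, where `append_once` is a hypothetical helper name and the profile path in the usage comment is an assumption.

```shell
#!/usr/bin/env bash
# Append a line to a file only if that exact line is not already present.
append_once() {
  line="$1"
  file="$2"
  touch "$file"
  # -x matches whole lines, -F treats the pattern as a fixed string.
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Example use (not executed here):
#   append_once 'export SPARK_HOME=$HOME/spark-3.0.1-bin-hadoop2.7' ~/.bash_profile
#   append_once 'export PATH=$PATH:$SPARK_HOME/bin' ~/.bash_profile
```

The single quotes in the usage lines keep `$HOME` and `$PATH` unexpanded, so they are evaluated at login rather than when the line is written.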

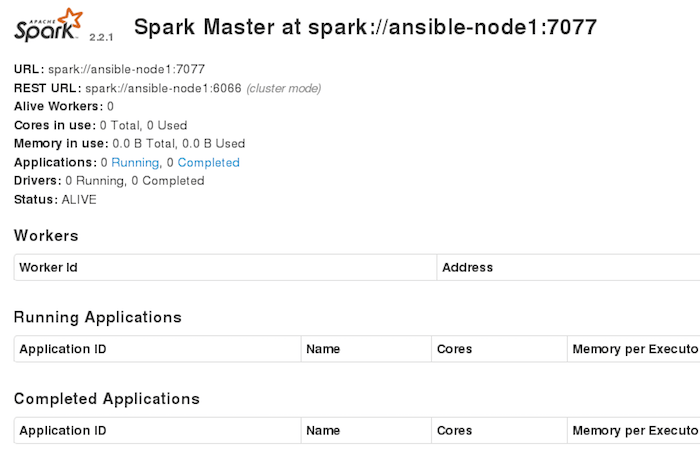

A standalone Spark cluster can be started manually, by executing the start scripts on each node, or by using the provided launch scripts. For testing, we can run the master and worker (slave) daemons on the same machine. Start the master first:

$SPARK_HOME/sbin/start-master.sh
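The command above starts only the master. To also run a worker on the same machine, something like the following sketch can be used. In Spark 3.0.x the worker launch script is `sbin/start-slave.sh`, which takes the master URL as its argument. The sketch assumes that SPARK_HOME is set, that the master listens on the default port 7077, and that it registered under the name printed by `hostname -f`; check the master log if the worker does not connect. `master_url` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Build a standalone master URL; the port defaults to 7077.
master_url() { echo "spark://${1}:${2:-7077}"; }

if [ -n "${SPARK_HOME:-}" ] && [ -x "$SPARK_HOME/sbin/start-master.sh" ]; then
  # Start the master, then a worker that registers with it.
  "$SPARK_HOME/sbin/start-master.sh"
  "$SPARK_HOME/sbin/start-slave.sh" "$(master_url "$(hostname -f)")"
else
  echo "SPARK_HOME is not set or does not contain sbin/start-master.sh"
fi
```

The matching `sbin/stop-slave.sh` and `sbin/stop-master.sh` scripts shut the daemons down again.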

Step 5. Configure Firewall for Apache Spark.

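Run the following commands to open the Spark master port (7077) and the master web UI port (8080) on the firewall:

sudo firewall-cmd --permanent --zone=public --add-port=7077/tcp
sudo firewall-cmd --permanent --zone=public --add-port=8080/tcp
sudo firewall-cmd --reload

Step 6. Accessing Apache Spark Web Interface.

The Spark master web interface is available on HTTP port 8080 by default. Port 7077 is the port that workers and applications use to connect to the master; it is not a web port. Open your favorite browser and navigate to http://your-domain.com:8080 or http://server-ip-address:8080 to see the master status page, which shows the master URL and any registered workers.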
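To check from the shell whether the Spark ports are reachable, a small probe like the following can help. It is a sketch that assumes bash (for its /dev/tcp feature), the coreutils `timeout` command, and the default standalone ports: 7077 for the master and 8080 for its web UI. `port_open` and `SPARK_HOST` are hypothetical names used only for this example.

```shell
#!/usr/bin/env bash
# Return 0 if a TCP connection to host:port succeeds within 3 seconds.
port_open() {
  timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# Set SPARK_HOST to the server's hostname or IP address; the master may
# listen only on that address rather than on localhost.
host="${SPARK_HOST:-localhost}"

for port in 7077 8080; do
  if port_open "$host" "$port"; then
    echo "port $port on $host: open"
  else
    echo "port $port on $host: closed or filtered"
  fi
done
```

Running it on the server itself checks that the daemons are listening; running it from another machine also exercises the firewall rules.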

Congratulations! You have successfully installed Apache Spark. Thanks for using this tutorial to install the Apache Spark open-source framework on your CentOS 8 system. For additional help or useful information, we recommend you check the official Apache Spark website.
